We have been featuring various theories about digital information management on this blog in order to highlight some of the debates involved in this complex and evolving field.

To offer a different perspective to those that we have focused on so far, take a moment to consider the principles of Parsimonious Preservation that have been developed by the National Archives, and in particular advocated by Tim Gollins, who is Head of Preservation at the Institution.

In some senses the National Archives seem to be bucking the trend of panic, hysteria and (sometimes) confusion that can be found in other literature relating to digital information management. The advice given in the report, ‘Putting Parsimonious Preservation into Practice’, advocates a hands-off approach, rather than the hands-on approach that many other institutions, including the British Library, recommend.

The principle that digital information requires continual interference and management during its life cycle is rejected wholesale by the principles of parsimonious preservation, which instead argue that minimal intervention is preferable because this entails ‘minimal alteration, which brings the benefits of maximum integrity and authenticity’ of the digital data object.

As detailed in our previous posts, cycles of coding and encoding pose a very real threat to digital data. This is because they can change the structure of the files and, in the long run, risk compromising the quality of the data object.

Minimal intervention in practice seems here like a good idea – if you leave something alone in a safe place, rather than continually move it from pillar to post, it is less likely to suffer from everyday wear and tear. With digital data, however, the problem of obsolescence is the main factor that prevents a hands-off approach. This too is downplayed by the National Archives report, which suggests that obsolescence, although undeniably a threat to digital information, is not as big a worry as it is often presented to be.

Gollins uses over ten years of experience at the National Archives, as well as the research conducted by David Rosenthal, to offer a different approach to obsolescence that takes note of the ‘common formats’ that have been used worldwide (such as PDF, .xls and .doc). The report therefore concludes ‘that without any action from even a national institution the data in these formats will be accessible for another 10 years at least.’

10 years may seem like a short period of time, but this is the timescale cited as practical and realistic for the management of digital data. Gollins writes:

‘While the overall aim may be (or in our case must be) for “permanent preservation” […] the best we can do in our (or any) generation is to take a stewardship role. This role focuses on ensuring the survival of material for the next generation – in the digital context the next generation of systems. We should also remember that in the digital context the next generation may only be 5 to 10 years away!’

It is worth mentioning here that the Parsimonious Preservation report only includes references to file extensions that relate to image files, rather than sound or moving images, so it would be a mistake to assume that the principle of minimal intervention can be equally applied to these kinds of digital data objects. Furthermore, .doc files used in Microsoft Office are not always consistent over time – have you ever tried to open a Word file from 1998 on an Office package from 2008? You might have a few problems. This is not to say that Gollins doesn’t know his stuff; he clearly must do to be Head of Preservation at the National Archives! It is just that this ‘hands-off, don’t worry about it’ approach seems odd in relation to the other literature about digital information management available from reputable sources like the British Library and the Digital Preservation Coalition. Perhaps there is a middle ground to be struck between active intervention and leaving things alone, but it isn’t suggested here!

For Gollins, ‘the failure to capture digital material is the biggest single risk to its preservation,’ far greater than obsolescence. He goes on to state that ‘this is so much a matter of common sense that it can be overlooked; we can only preserve and process what is captured!’ Another issue here is the quality of the capture – it is far easier to preserve good quality files if they are captured at appropriate bit rates and resolution. In other words, there is no point making low resolution copies because they are less likely to survive the rapid succession of digital generations. As Gollins writes in a different article exploring the same theme, ‘some will argue that there is little point in preservation without access; I would argue that there is little point in access without preservation.’

This has been a bit of a whirlwind tour through a very interesting and thought-provoking report that explains how a large memory institution has put into practice a very different kind of digital preservation strategy. As Gollins concludes:

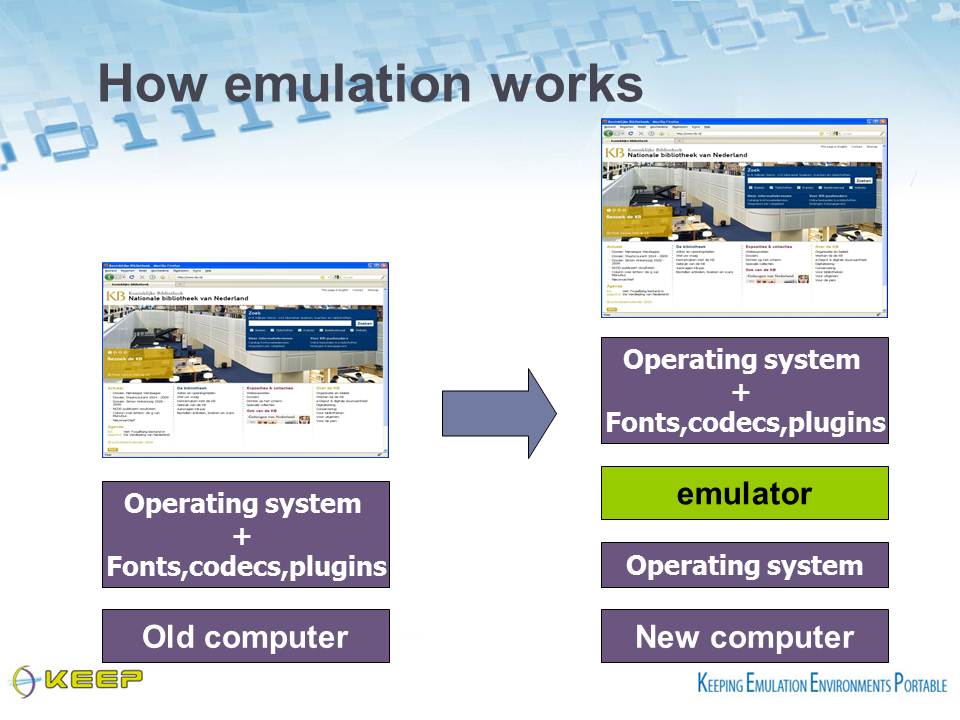

‘In all of the above discussion readers familiar with digital preservation literature will perhaps be surprised not to see any mention or discussion of “Migration” vs. “Emulation” or indeed of “Significant Properties”. This is perhaps one of the greatest benefits we have derived from adopting our parsimonious approach – no such capability is needed! We do not expect that any data we have or will receive in the foreseeable future (5 to 10 years) will require either action during the life of the system we are building.’

Whether or not such an approach is naïve, neglectful or very wise, only time will tell.